The Core Distinction: Building AI Products vs. Building AI Models

Most coverage of AI focuses on model research: training large neural networks, designing new architectures, and pushing benchmark scores. Only a handful of research labs worldwide can afford that work. Generative AI development is different from training massive models like GPT, Gemini, or Claude from scratch, and it is far more accessible.

Generative AI developers take pre-built models and wire them into applications that solve real problems. The focus is on product quality, reliability, and user experience, not on the underlying neural network parameters.

This distinction matters because companies don’t need to hire a PhD in machine learning. They need developers with solid software engineering fundamentals, an understanding of how language models behave, and the ability to design systems around their strengths and limitations.

Why It Matters Now

Generative AI has made creating text, code, images, and data massively cheaper and faster - what used to take hours or days can now be done in minutes. Every software product that involves content creation, information retrieval, or user interaction is now a candidate for AI augmentation. The developers who understand how to build reliably with these models, not just demo them, will define the next generation of software products.

Generative AI development is, at its core, a craft: it rewards iteration, careful observation, and deep understanding of how models behave in the wild. It is early enough that the best practices are still being written, which means the barrier to making a meaningful contribution is lower than in almost any other area of software engineering.

The New Engineering Challenges

Generative AI development introduces challenges for which traditional software engineering has no established playbook yet.

- Non-determinism: The same input can produce different outputs on different runs, which makes debugging and testing fundamentally harder.

- Context window management: Models have a fixed context limit; managing what information to include and what to leave out is a critical design constraint.

- Hallucinations: Models can generate confident, plausible-sounding content that is factually wrong. Production systems must be designed to mitigate or detect this.

- Latency and cost: API calls to large models are slow and expensive compared to traditional database queries. Caching, streaming, and model selection all become cost-engineering concerns.

- Safety and alignment: Ensuring the model does not produce harmful, offensive, or off-brand output at scale requires both prompting strategies and runtime guardrails.
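To make the context-window constraint from the list above concrete, here is a minimal sketch that keeps only the most recent conversation turns that fit a token budget. The function names and the rule of thumb of roughly four characters per token are assumptions for illustration; a real system would count tokens with the model's own tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Rough token estimate (assumption: about 4 characters per token)."""
    return max(1, len(text) // 4)


def trim_history(system_prompt: str, turns: list[str], budget: int) -> list[str]:
    """Keep the system prompt plus the newest turns that fit within `budget` tokens."""
    remaining = budget - estimate_tokens(system_prompt)
    kept: list[str] = []
    # Walk backwards so the newest turns are considered first.
    for turn in reversed(turns):
        cost = estimate_tokens(turn)
        if cost > remaining:
            break
        kept.append(turn)
        remaining -= cost
    # Restore chronological order before returning.
    return [system_prompt] + list(reversed(kept))
```

Dropping the oldest turns is only one possible policy; summarizing older turns or retrieving them on demand are alternatives with different trade-offs.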

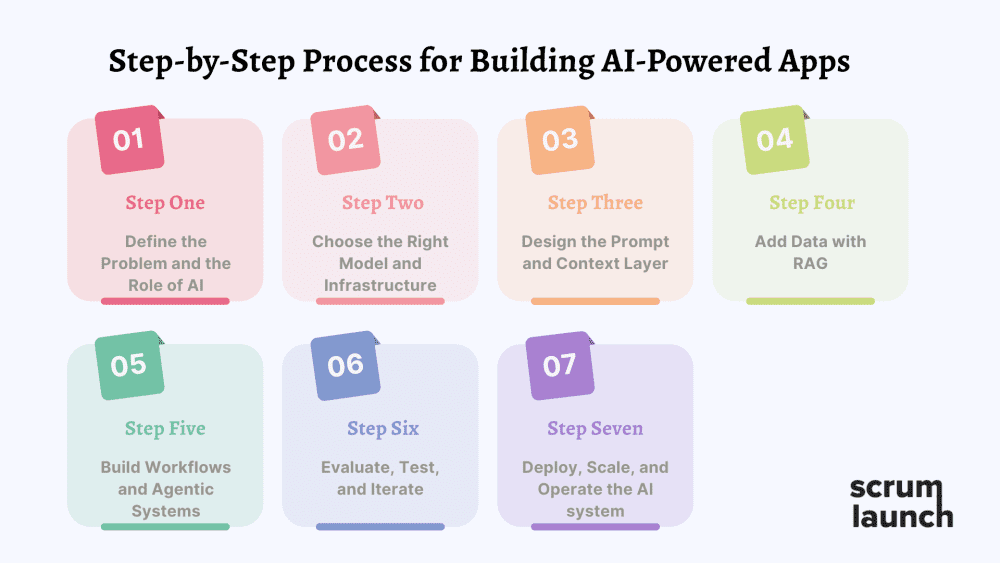

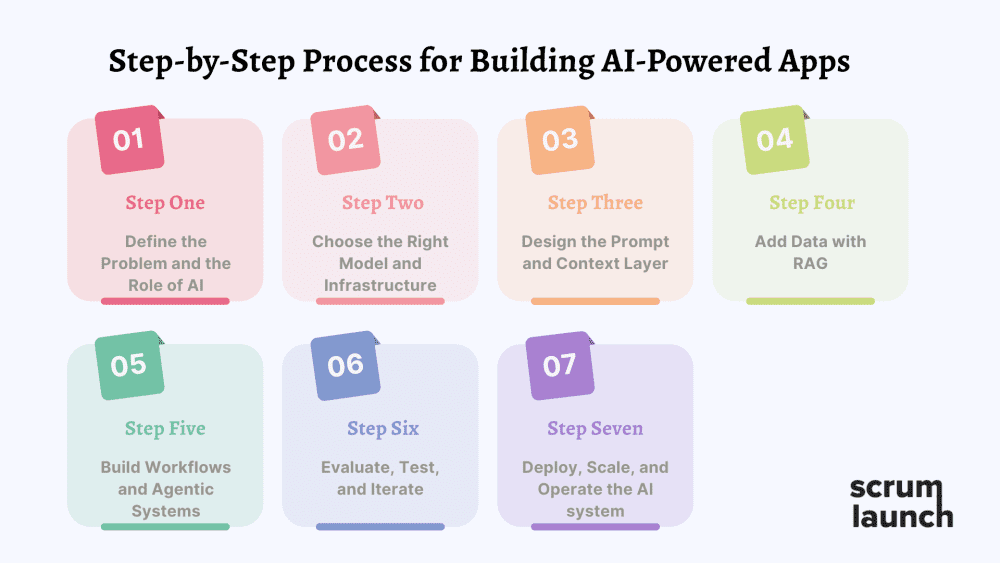

How AI-Powered Applications Are Built: A Step-by-Step Process

Building a generative AI application that solves a real problem is not a single act - it is a structured process combining product thinking, systems design, and iterative experimentation.

Below we describe how that process usually looks step by step.

Step 1: Define the Problem and the Role of AI

Not every problem benefits from generative AI, so start by identifying a clear pain point where it adds unique value. For example, AI can be extremely effective for tasks such as summarizing internal documentation, generating personalized emails, assisting with code reviews, or answering customer support questions. In contrast, deterministic systems like payment processing or transaction validation rarely benefit from generative models.

Once the problem is validated, developers determine what role AI should play in the system.

Map exactly what the AI must do: generate text in brand voice? Reason over documents and images? Execute actions like querying databases or sending emails? After defining the expected outputs, assess how those AI-generated outputs will fit into the actual software or service you’re building.

Developers usually start by quickly testing the idea directly with frontier models, often through chat interfaces like ChatGPT or Claude. At this stage, experimenting with prompts and simple workflows helps determine whether the model can reliably solve the task. It is also worth validating the idea with 10–20 real users or internal stakeholders before investing further.

Step 2: Choose the Right Model and Infrastructure

Model selection is one of the most important architectural decisions. Different models have different strengths: some perform better at structured reasoning, others at long-document summarization or code generation. Developers evaluate several factors when selecting a model:

- output quality and reasoning ability

- latency and response speed

- cost per token or inference

- context window size

- privacy and data-handling requirements.

Developers must also decide how the model will be hosted. The simplest option is to use a hosted model provided by an AI company. Models from providers like OpenAI, Anthropic, or Google run on their infrastructure, and your application interacts with them through an API.

Another option is to deploy an open-weight model such as Llama, Mistral, or Qwen on your own infrastructure. In this case, the model runs on servers you control, typically using GPU hardware. Instead of paying per request, you pay mainly for running and maintaining the necessary infrastructure. This approach provides more control over privacy, customization, and long-term costs, but it also requires powerful hardware and engineering effort. That’s why this setup is common for large companies, highly regulated industries, and very high-traffic apps.

A third option is to access open models through a hosted inference service. In this setup, another company runs the infrastructure while providing API access to open models. This approach combines the convenience of APIs with the flexibility of open models, allowing developers to avoid managing their own hardware.
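The trade-off between per-request API pricing and fixed self-hosting cost can be made concrete with simple break-even arithmetic. The sketch below is illustrative only: the function names are hypothetical, and any prices or volumes you pass in are placeholders, not real vendor prices.

```python
def monthly_api_cost(requests: int, tokens_per_request: int,
                     price_per_million_tokens: float) -> float:
    """Estimated monthly spend when paying per token through a hosted API."""
    return requests * tokens_per_request * price_per_million_tokens / 1_000_000


def break_even_requests(fixed_monthly_cost: float, tokens_per_request: int,
                        price_per_million_tokens: float) -> float:
    """Monthly request volume at which fixed infrastructure cost equals API spend."""
    cost_per_request = tokens_per_request * price_per_million_tokens / 1_000_000
    return fixed_monthly_cost / cost_per_request
```

Below the break-even volume, paying per request is cheaper under these assumptions; above it, fixed infrastructure starts to pay off. The sketch ignores the engineering effort of operating the hardware.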

Most organizations combine multiple models rather than relying on a single provider. A powerful reasoning model might handle complex tasks, while a smaller and faster model processes high-volume requests. As of March 2026, frontier leaders include Gemini 3.1 Pro (speed/context), Claude 4.5/4.6 (reasoning/safety), GPT-5 variants (agentic routing), Grok 4 (real-time/unfiltered), and open standouts like DeepSeek V3.2, Mistral 3, Qwen 3.5, and Llama 4.
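A multi-model setup like the one described above needs some routing logic. The following is a minimal sketch, assuming a keyword and length heuristic; the model names, the character limit, and the hint list are all hypothetical, and production routers often use a classifier or the small model itself to make this decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    max_input_chars: int


# Hypothetical tiers; substitute the models you actually use.
SMALL = ModelTier("small-fast-model", 2_000)
LARGE = ModelTier("large-reasoning-model", 200_000)

# Crude substring hints that a request needs multi-step reasoning (assumption).
REASONING_HINTS = ("why", "compare", "plan", "analyze", "step by step")


def route(request: str) -> ModelTier:
    """Send long or reasoning-heavy requests to the large model, the rest to the small one."""
    text = request.lower()
    needs_reasoning = any(hint in text for hint in REASONING_HINTS)
    too_long = len(request) > SMALL.max_input_chars
    return LARGE if needs_reasoning or too_long else SMALL
```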

Step 3: Design the Prompt and Context Layer

In generative AI applications, the prompt is the primary way your app communicates with the model. It’s not just the user’s question - it’s a structured input that combines developer instructions, dynamic user input, and any contextual data the system provides. The developer defines the model’s role, tone, behavior, and output format, while the user provides the specific request, and context such as documents, databases, or conversation history is added to ensure accurate responses.
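The three layers described above (developer instructions, contextual data, and user input) can be assembled as in this minimal sketch. The role/content message shape is a convention many chat APIs accept, but the exact field names for a given provider are an assumption here, and `build_messages` is a hypothetical helper.

```python
def build_messages(instructions: str, context_docs: list[str],
                   user_input: str) -> list[dict[str, str]]:
    """Combine developer instructions, retrieved context, and the user's request."""
    system = instructions
    if context_docs:
        # Label each document so the model (and a later reader of the logs) can refer to it.
        context_block = "\n\n".join(
            f"[doc {i}] {doc}" for i, doc in enumerate(context_docs, start=1)
        )
        system += f"\n\nAnswer using only the context below.\n\n{context_block}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user_input},
    ]
```

Keeping user input in its own message, rather than concatenating it into the instructions, makes it easier to log, test, and guard each layer separately.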

Careful prompt design is critical. Small changes in wording, context placement, or output instructions can dramatically affect results. Modern developers often call this context engineering, since production systems rely on including the right context in the right way to guide model behavior reliably. The goal is to make outputs consistent, useful, and properly formatted before they reach the user.

Developers also adjust model parameters such as temperature, max tokens, and top-p (also known as nucleus sampling) to control output creativity and consistency. When prompts alone aren’t enough, they may fine-tune models on small, task-specific datasets to achieve the desired behavior. For example, when a specific tone, domain vocabulary, or formatting style is required, developers may apply parameter-efficient fine-tuning techniques such as LoRA or QLoRA to adapt a base model using smaller domain-specific datasets.
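To show what temperature and top-p do to the next-token distribution, here is a small sketch in plain Python. It operates on a toy dictionary of logits; the function names are hypothetical, and real inference engines apply the same ideas to vocabulary-sized tensors.

```python
import math
import random
from typing import Optional


def apply_temperature(logits: dict[str, float], temperature: float) -> dict[str, float]:
    """Softmax over logits divided by temperature; lower values sharpen the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {tok: value / temperature for tok, value in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(value - peak) for tok, value in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def top_p_filter(probs: dict[str, float], top_p: float) -> dict[str, float]:
    """Keep the smallest set of most likely tokens whose cumulative probability reaches top_p."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = [], 0.0
    for tok, p in ranked:
        kept.append((tok, p))
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(p for _, p in kept)
    return {tok: p / total for tok, p in kept}  # renormalize the survivors


def sample(logits: dict[str, float], temperature: float = 1.0, top_p: float = 1.0,
           rng: Optional[random.Random] = None) -> str:
    """Draw one token after temperature scaling and nucleus filtering."""
    rng = rng or random.Random()
    probs = top_p_filter(apply_temperature(logits, temperature), top_p)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]
```

A low temperature or a small top-p concentrates probability on the most likely tokens, which trades variety for consistency.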

Step 4: Add Data with RAG

Most real-world AI applications rely on information that was not part of the model’s original training data, such as internal documents, product knowledge bases, or real-time information. To make this data accessible, developers build a retrieval pipeline, most commonly using Retrieval-Augmented Generation (RAG). This pipeline combines language models with external data sources.

In a typical RAG system, documents are first ingested from sources such as databases, internal files, or web content. These documents are then split into smaller segments, or chunks, which are converted into vector embeddings and stored in vector databases such as Pinecone, Weaviate, Qdrant, or PostgreSQL with pgvector.

When a user submits a query, the system searches the vector database to retrieve the most semantically relevant chunks and injects them as context into the model prompt. This allows the model to generate responses grounded in current or proprietary data rather than relying only on its training.
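The ingest, chunk, embed, retrieve, and inject steps can be sketched end to end in a few lines. This is a toy under stated assumptions: the bag-of-words `embed` function stands in for a real embedding model, an in-memory list stands in for a vector database, and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 40) -> list[str]:
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy embedding: word counts (a stand-in for a learned embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, index: list[tuple[str, Counter]], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Inject retrieved chunks as context ahead of the question."""
    context = "\n".join(f"- {c}" for c in chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

A usage sketch with made-up documents: build the index once with `[(c, embed(c)) for doc in docs for c in chunk(doc)]`, then call `build_prompt(query, retrieve(query, index))` per request.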

Developers carefully tune the retrieval pipeline to improve accuracy. Important factors include chunk size, metadata filtering, ranking or re-ranking models, and hybrid search techniques that combine semantic and keyword retrieval. These design choices significantly affect system performance. If the retrieval layer returns irrelevant information, even the most capable language model will generate incorrect answers.
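One common way to combine semantic and keyword results is reciprocal rank fusion (RRF), which merges ranked lists without needing comparable scores. The text above does not prescribe a specific fusion method, so treat this as one option among several; the constant `k = 60` is a conventional default, not a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one list.

    Each document scores 1 / (k + rank) for every list it appears in,
    so items ranked well by multiple retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)
```

For example, passing one list from a vector search and one from a keyword search favors documents that both retrievers rank highly.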

Step 5: Build Workflows and Agentic Systems

Some applications require more than generating text: the AI must also retrieve real data, perform calculations, or trigger actions. In these cases, developers design agentic systems where the model can call external tools such as search functions, database queries, code executors, email senders, or third-party APIs.

More advanced applications implement agentic workflows, where the model plans and executes multiple steps to complete a task. The model decides which tool to use and in what sequence, turning a single user request into a multi-step workflow. For example, the AI may first gather information, then analyze it, and finally generate a response. Patterns such as plan–execute–verify or ReAct (Reasoning and Acting) help structure these multi-step processes.
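The control flow of such a multi-step loop can be sketched without any framework. In the example below, `fake_model` is a scripted stand-in for a real LLM call, `get_order_status` is a hypothetical tool backed by stub data, and the decision dictionary format is an assumption; the point is the loop structure of decide, act, observe, and stop.

```python
from typing import Callable


def get_order_status(order_id: str) -> str:
    """Hypothetical tool: look up an order in a stubbed data source."""
    return {"A17": "shipped"}.get(order_id, "unknown")


TOOLS: dict[str, Callable[[str], str]] = {"get_order_status": get_order_status}


def fake_model(history: list[dict]) -> dict:
    """Stand-in for an LLM: request a tool first, then answer from its result."""
    last = history[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"Your order is {last['content']}."}
    return {"type": "tool_call", "tool": "get_order_status", "argument": "A17"}


def run_agent(user_request: str, model: Callable[[list[dict]], dict] = fake_model,
              max_steps: int = 5) -> str:
    """Let the model alternate between tool calls and observations until it answers."""
    history = [{"role": "user", "content": user_request}]
    for _ in range(max_steps):
        decision = model(history)
        if decision["type"] == "final":
            return decision["content"]
        tool = TOOLS.get(decision["tool"])
        # Report unknown tools back to the model instead of crashing.
        result = tool(decision["argument"]) if tool else f"error: unknown tool {decision['tool']}"
        history.append({"role": "tool", "content": result})
    return "Stopped: step limit reached."
```

The step limit matters in practice: without it, a model that keeps requesting tools would loop indefinitely and keep accruing cost.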

Developers often rely on frameworks like LangGraph, CrewAI, or AutoGen to build and manage these workflows. These tools help orchestrate agents, handle tool calls, and maintain conversation state across interactions. In many applications, systems also implement memory mechanisms to preserve context during longer conversations or complex tasks.

Step 6: Evaluate, Test, and Iterate

Testing generative AI systems is fundamentally different from testing traditional software. In conventional software, the same input produces the same output, so bugs are usually reproducible. AI systems, however, produce probabilistic outputs, meaning the same input can generate slightly different results each time, which makes conventional exact-match unit tests insufficient on their own.

To maintain quality, developers build evaluation frameworks that include curated test inputs paired with expected outcomes. These evaluation suites help detect regressions when prompts, models, or retrieval pipelines change. The goal is to replace subjective impressions with measurable signals that track quality over time.

Common evaluation techniques include human review of sampled outputs, automated model-graded evaluation where a second AI scores the primary model's responses, and golden-set benchmarks against known correct answers. In addition, teams perform human red-teaming, where testers intentionally probe the system with challenging prompts to uncover edge cases, biases, prompt injections, or attempts to bypass safety constraints.
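A golden-set benchmark can start as small as the sketch below. The test cases are made up for illustration, the substring check is a deliberately simple grader, and `run_eval` is a hypothetical helper; each case is run several times because outputs can vary between runs.

```python
from typing import Callable

# Hypothetical golden set: inputs paired with phrases a correct answer must contain.
GOLDEN_SET = [
    {"input": "What is the refund window?", "must_contain": ["14 days"]},
    {"input": "Do you ship internationally?", "must_contain": ["yes"]},
]


def passes(output: str, must_contain: list[str]) -> bool:
    """Case-insensitive check that every required phrase appears in the output."""
    text = output.lower()
    return all(phrase.lower() in text for phrase in must_contain)


def run_eval(generate: Callable[[str], str], cases: list[dict],
             runs_per_case: int = 3) -> float:
    """Return the pass rate across all cases and repeated runs."""
    results = [
        passes(generate(case["input"]), case["must_contain"])
        for case in cases
        for _ in range(runs_per_case)
    ]
    return sum(results) / len(results)
```

Tracking this pass rate before and after a prompt, model, or retrieval change gives a measurable regression signal. A model-graded evaluator could replace `passes` when substring checks are too strict.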

Developers also rely on observability and tracing tools, such as LangSmith, Phoenix, or Helicone, to monitor prompts, responses, tool calls, latency, and token usage. These tools make it easier to debug issues and continuously improve AI systems in production.

Step 7: Deploy, Scale, and Operate the AI System

Once an AI application moves into production, developers must ensure it remains reliable, safe, and cost-efficient under real user traffic. Production systems typically include guardrails that filter harmful, off-topic, or policy-violating outputs before they reach users. Input validation, output classifiers, and rate limiting help prevent abuse and keep responses aligned with application guidelines.
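Two of the guardrails mentioned above, input validation and rate limiting, can be sketched as follows. The blocked pattern, the length limit, and the class and function names are illustrative assumptions; a single regular expression is nowhere near a complete defense against prompt injection.

```python
import re
import time
from collections import defaultdict, deque
from typing import Optional

# Illustrative values only; real systems tune these and add classifier-based checks.
BLOCKED_PATTERNS = [re.compile(r"ignore (all )?previous instructions", re.IGNORECASE)]
MAX_INPUT_CHARS = 4_000


def validate_input(text: str) -> tuple[bool, str]:
    """Reject empty, oversized, or obviously suspicious input before calling the model."""
    if not text.strip():
        return False, "empty input"
    if len(text) > MAX_INPUT_CHARS:
        return False, "input too long"
    if any(pattern.search(text) for pattern in BLOCKED_PATTERNS):
        return False, "possible prompt injection"
    return True, "ok"


class RateLimiter:
    """Sliding window: at most `limit` requests per `window` seconds for each user."""

    def __init__(self, limit: int, window: float) -> None:
        self.limit = limit
        self.window = window
        self.calls: dict[str, deque] = defaultdict(deque)

    def allow(self, user_id: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        recent = self.calls[user_id]
        # Drop timestamps that have aged out of the window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        if len(recent) >= self.limit:
            return False
        recent.append(now)
        return True
```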

Observability is equally critical. Developers instrument their pipelines to log prompts, responses, tool calls, latency, and cost per request. These logs serve as the primary diagnostic tool when users report issues, and over time they become valuable data for building new evaluation sets or fine-tuning datasets.
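A minimal version of this instrumentation is a wrapper that records one structured log entry per model call. In the sketch below, `traced` is a hypothetical helper, the token count is a rough character-based estimate, and the price is whatever placeholder you pass in; dedicated tracing tools capture far more detail.

```python
import json
import time
from typing import Callable


def traced(generate: Callable[[str], str], price_per_1k_tokens: float,
           sink: list[str]) -> Callable[[str], str]:
    """Wrap a model call so each request appends a JSON log record to `sink`."""

    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        response = generate(prompt)
        latency = time.perf_counter() - start
        # Rough estimate (assumption: about 4 characters per token).
        tokens = (len(prompt) + len(response)) // 4
        sink.append(json.dumps({
            "prompt": prompt,
            "response": response,
            "latency_s": round(latency, 4),
            "estimated_tokens": tokens,
            "estimated_cost": tokens / 1000 * price_per_1k_tokens,
        }))
        return response

    return wrapper
```

Writing records as JSON lines keeps them easy to filter later, for example when assembling an evaluation set from real traffic.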

Scalability and cost optimization are also key considerations. Efficient deployments often rely on optimized inference engines such as vLLM or TensorRT-LLM, model compression techniques like quantization, caching strategies, batch inference, and autoscaling infrastructure to handle varying workloads while controlling costs.

Finally, production AI systems are continuously improved through feedback loops. Developers monitor usage patterns, collect user feedback, run A/B tests on prompts or models, and adapt systems as new models, techniques, and research become available.
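Of the cost levers mentioned above, caching is the simplest to illustrate. This sketch shows an exact-match response cache keyed on the model name and a whitespace-normalized prompt. The class name is hypothetical, and exact-match caching only suits requests where returning an identical answer is acceptable.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """In-memory cache that skips the model call for repeated prompts."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self.generate = generate
        self.store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key(model: str, prompt: str) -> str:
        # Collapse whitespace so trivially different prompts share one entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get(self, model: str, prompt: str) -> str:
        cache_key = self.key(model, prompt)
        if cache_key in self.store:
            self.hits += 1
        else:
            self.misses += 1
            self.store[cache_key] = self.generate(prompt)
        return self.store[cache_key]
```

Including the model name in the key avoids serving one model's answer for another. A production cache would also need expiry and a size bound.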

Conclusion

Generative AI development isn’t about reinventing the wheel - or the massive models powering it. It’s about taking those wheels and building vehicles that actually get you somewhere: reliable, user-focused products that solve real problems. That’s where the opportunity is in 2026. This field is still young. The tools are changing, best practices are being written as we go, and the gap between a flashy demo and a production-ready system is huge. But that's exactly what makes it one of the most exciting disciplines in software engineering today. Developers who learn to navigate that gap - who understand not just how to prompt a model, but how to build reliable systems around it - will define the next decade of generative AI development.