Anthropic positions Claude as an AI users can trust with complex inputs, sensitive data, and long-running workflows, without constantly fighting hallucinations, tone drift, or brittle behavior. It's also good at distinguishing facts from assumptions and rarely "invents" anything that isn't in the source, which is critical for legal, financial, and scientific materials.

Claude is also built with an API-first, platform-agnostic approach. It doesn’t assume you’re working inside a specific ecosystem. You can integrate it into custom workflows, internal tools, or deploy it through services like Amazon Bedrock. In many organizations, the limiting factor isn’t capability - it’s whether a tool can fit into existing infrastructure without creating security, compliance, or operational issues. Claude is designed to fit into those environments.

In 2025, Anthropic significantly expanded Claude's functionality: it now supports working with files, images, tables, and presentations. It can remember earlier dialogue and adapt to the user's style. In 2026, it added Claude Skills, allowing the system to apply saved rules for particular tasks.

Claude Models

Anthropic offers three main Claude model tiers: Opus, Sonnet, and Haiku. Each is available in several generations; the most current is the 4.6 family (Opus and Sonnet), released at the beginning of 2026.

Claude Opus 4.6 – deep reasoning & complex tasks

Released in beta on February 5, 2026, Opus 4.6 is one of Anthropic’s most capable models so far. It offers a 1 million token context window, improved coding, and enhanced reasoning. It is designed for complex tasks where accuracy is critical: deep data analysis, complex system architecture, creative projects requiring out-of-the-box thinking, long-term planning, and agent-based workflows.

Claude Sonnet 4.6 – balanced, everyday professional work

Released on February 17, 2026, Sonnet 4.6 excels at writing articles, analyzing data, generating complex code, and solving logic problems. For users working with content, marketing, or business process automation, Sonnet 4.6 is a go-to tool that handles the majority of tasks at a professional level.

Claude Haiku 4.5 – speed & cost

Claude Haiku has not been upgraded yet, and the latest version is 4.5, released in October 2025. It is the fastest and most economical model in the current line, which also makes it suitable for startups and freelancers on a budget. It’s ideal for speed-critical, high-volume tasks, such as processing thousands of customer inquiries or creating simple product descriptions for an online store.

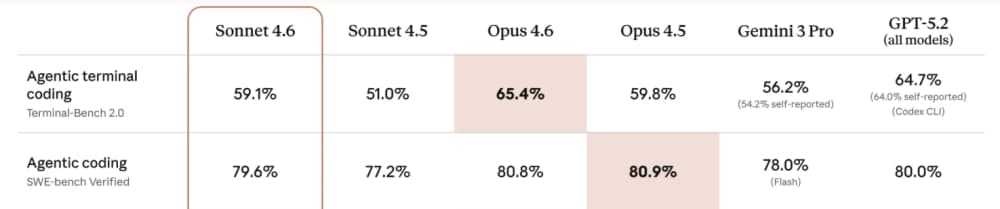

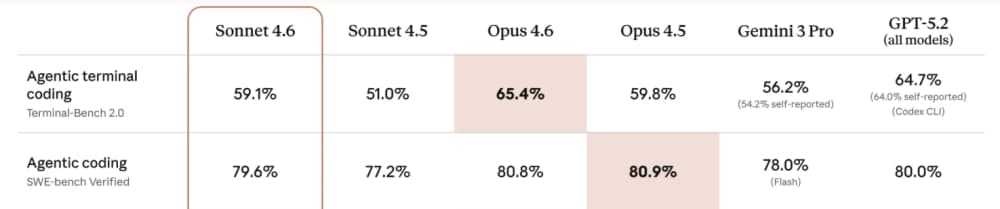

Claude is particularly valued for its strong coding capabilities. Indeed, Claude's main advantages are its clean code and its ability to understand the context of large projects (entire repositories). Claude Opus 4.6 achieved 80.8% on SWE-bench Verified, while Claude Sonnet 4.6 achieved 79.6%, outperforming GPT-5.2 and Gemini 3 Pro. The previous model, Opus 4.5, scored even higher - 80.9%.

Claude’s Core Features:

Safety-first design

Most AI systems handle safety as a layer on top - filters applied after the model generates an answer. Claude takes a different approach. It is built using Constitutional AI - a training methodology developed by Anthropic. The model learns not only from large volumes of data but also from a defined set of principles (a "constitution") that shapes its behavior during training. This approach makes Claude's outputs more predictable, reduces the risk of toxic or inappropriate content, and improves the explainability of its responses.

Large context window

Claude Opus 4.6 and Sonnet 4.6 both support a 1 million token context window, now generally available at standard pricing - no long-context surcharge, no special configuration required. In practical terms, this means Claude can process thousands of pages of documentation, an entire codebase, or a long chain of reasoning in a single request without losing coherence. For teams working with contracts, technical specs, research papers, or large codebases, this removes the need to chunk content manually and stitch responses back together, which is where context loss typically happens.
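To make "fits in a single request" concrete, here is a minimal sketch of a pre-flight size check. It assumes a rule of thumb of roughly 4 characters per token for English text; that ratio, the `reserved_for_output` budget, and the function names are illustrative assumptions, not figures from Anthropic. Real tokenizers vary, so treat the result as an estimate.

```python
# Rough check of whether a document fits in a 1M-token context window.
# ASSUMPTION: ~4 characters per token is a common rule of thumb for English
# text; real tokenizers vary, so this is an estimate only.
CONTEXT_WINDOW_TOKENS = 1_000_000
CHARS_PER_TOKEN = 4  # hypothetical heuristic, not an official figure


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the character count (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, reserved_for_output: int = 8_000) -> bool:
    """Return True if the estimated prompt still leaves room for a response."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


# Stand-in for a ~2 MB plain-text specification.
spec = "x" * 2_000_000
print(estimate_tokens(spec), fits_in_context(spec))
```

A check like this is only a guard against obviously oversized inputs; for billing or hard limits, a provider's own token-counting facility would be the more reliable source.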

Artifacts – Results Visualization

The Artifacts feature turns Claude into a true AI assistant for 2026. When Claude generates something - code, a UI component, a diagram - it doesn’t just return it as text. It renders it in a separate interactive window where users can see the output immediately, edit it in place, iterate without restarting the workflow, and even export it. It is especially useful for those using Claude for no-code development or prototyping without programming skills. Artifacts serves as an interactive sandbox where you can test code, visualize data, and create web elements in real time. It effectively turns Claude from a text generator into a lightweight development environment.

Where Claude Excels

Claude excels at maintaining quality and consistency, coding assistance (especially for complex refactoring), long-context reasoning, structured analytical thinking, and any work where getting the answer right matters more than getting it fast. If you feed it a 500-page technical spec or a messy legacy codebase, it doesn't lose the thread halfway through. It reads and processes it as a coherent whole, holds the context, and responds like a calm and careful editor who actually finished the document, not like a tool guessing at what you meant. That same quality shows up in writing. Long-form technical documentation, structured reports - Claude maintains consistency in a way that most models don't at scale. The trade-offs are real, too. Web search is not always on (it has to be enabled explicitly), and there are few out-of-the-box integrations with productivity tools.

What is Gemini?

Gemini is Google's answer to AI assistants. It is a family of highly capable, multimodal large language models developed by the combined forces of Google Brain and DeepMind. Launched as Google’s most ambitious AI project to date, Gemini was built from the ground up to be natively multimodal. Unlike models that added vision capabilities later, Gemini was trained to reason across multiple modalities at once, which shows in how naturally it handles tasks like analyzing charts or extracting data from screenshots. It doesn’t just process text: it understands, operates across, and combines different types of information, including code, audio, images, and video.

Gemini prioritizes access to real-time information and focuses on three core pillars: multimodality (reasoning across complex visual and auditory data), scalability and efficiency (combining intelligence with low latency), and safety and responsibility (avoiding unfair bias and maintaining high standards of factual accuracy).

Google's goal with Gemini goes beyond creating a standalone assistant. The model serves as an AI layer embedded across Google Search, Google Workspace (Gmail, Docs, Sheets, Slides), Android, and Google Cloud. This integration means Gemini can pull real-time information from Google Search, access your calendar, or draft emails based on context from your inbox, all in the same conversation. So, Gemini slots in naturally if you're already using Gmail, Google Docs, and Google Cloud. But if you're not, you'll miss some of its most useful features.

Gemini is built for users who need an AI that can switch between analyzing an image, searching the web for current data, and generating a video concept, all in one conversation. By integrating deeply with the world's most used productivity tools and search infrastructure, Gemini aims to transition AI from a conversational novelty into a powerful, ubiquitous collaborator for everyone.

Gemini Models:

Gemini 3.1 Pro - advanced reasoning & complex multimodal tasks

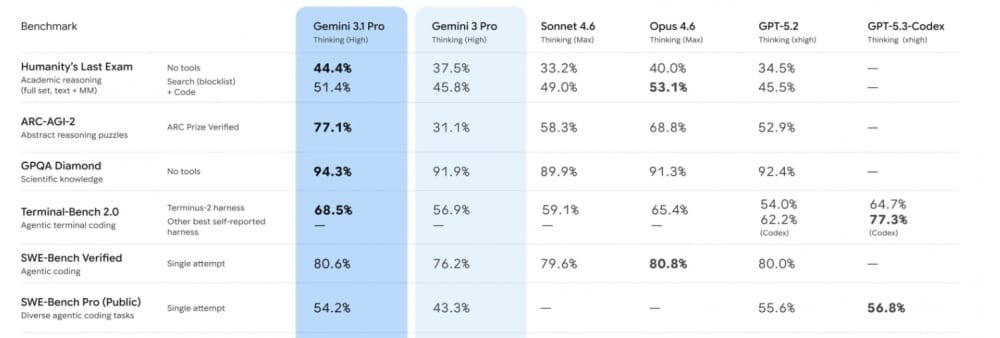

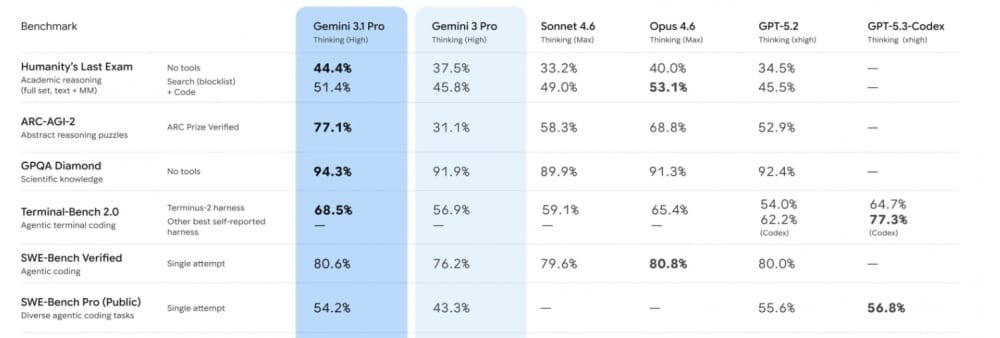

Gemini 3.1 Pro is a flagship "advanced thinking" model launched on February 19, 2026. It is designed for the most complex cognitive tasks, such as deep research, multi-step planning, and "vibe coding" (high-level software architecture). Like the previous model, Gemini 3 Pro, it has a 1M+ token context window. That is more than enough to load an entire code repository, an hour-long 4K video, or a hundred-page PDF document into the prompt without breaking it into pieces. The main highlight is the model's 77.1% score on the ARC-AGI-2 test, which assesses the ability to solve completely new visual-logical problems. By comparison, Google’s previous model scored only 31.1%.

Gemini 3.1 Flash-Lite: speed & high-volume efficiency

Released on March 3, 2026, this is the ultra-efficient "workhorse" of the family, meant for cases where response time, throughput, and cost are critical. It is built for high-frequency, low-latency tasks such as classification, translation, request routing, and simple processing of large data streams. Google documentation describes it as a model with performance comparable to more powerful systems (such as the Pro models), but at a lower cost and optimized for speed.

Gemini 3.1 Flash Live: real-time voice & audio interaction

Launched on March 26, 2026, this is a specialized "Flash" model built specifically for the Live API. It is an audio-to-audio (A2A) model designed for real-time, voice-first interactions where low latency and natural conversation flow are the top priorities.

Gemini Core Features:

Native multimodal input

Gemini was built from the ground up to be natively multimodal. For use cases like analyzing a recorded meeting alongside its slide deck, or auditing a video walkthrough against written documentation, this removes the need for separate processing pipelines. For a business, this translates to "universal context" - users can upload a video of a technical keynote, a 500-page PDF manual, and a zip file of source code, and ask Gemini to find a specific UI bug mentioned in the video and fix it in the code. Gemini 3.1 Pro, specifically, can process up to 900 images per prompt, ~8.4 hours of audio, and up to 1 hour of video (or ~45 minutes with audio), while supporting PDFs up to 900 pages per file, without format conversion.

Long-Context Understanding with Context Caching

Gemini features a 1-million-token context window as a standard foundation. While other models are just reaching the 1M mark, Gemini has optimized this with Context Caching - a feature that lets organizations upload large reference materials (company knowledge base, codebase, policy documents, etc.) once and reuse them across many queries. For organizations, this is a game-changer: instead of resending the full context and paying to "re-read" that data every time, the model keeps it in active memory. It results in near-instant responses and up to a 90% reduction in costs for long-context tasks.
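The cost effect of caching can be sketched with simple arithmetic. The model below is a deliberately simplified assumption, not Google's actual billing formula: the first query pays the full input price, every later query pays a discounted price for the cached reference tokens, and storage fees are ignored. The $2 per million input tokens and the 90% discount are taken from figures quoted in this article; the function names and the example workload are hypothetical.

```python
def input_cost(tokens: int, price_per_million: float) -> float:
    """Cost in dollars for a given number of input tokens."""
    return tokens / 1_000_000 * price_per_million


def compare_caching(reference_tokens: int, question_tokens: int, num_queries: int,
                    price_per_million: float = 2.0, cached_discount: float = 0.90):
    """Return (cost_without_cache, cost_with_cache) for repeated queries.

    ASSUMPTIONS: cached tokens are billed at (1 - cached_discount) of the
    normal input price, the first query is billed in full, and cache storage
    fees are ignored. Real billing may differ.
    """
    full_query = input_cost(reference_tokens + question_tokens, price_per_million)
    without_cache = num_queries * full_query

    cached_price = price_per_million * (1 - cached_discount)
    cached_query = (input_cost(reference_tokens, cached_price)
                    + input_cost(question_tokens, price_per_million))
    with_cache = full_query + (num_queries - 1) * cached_query
    return without_cache, with_cache


# Hypothetical workload: a 150K-token knowledge base queried 100 times.
without_cache, with_cache = compare_caching(150_000, 500, 100)
print(f"without cache: ${without_cache:.2f}, with cache: ${with_cache:.2f}")
```

Under these assumptions the overall saving comes out a little under 90%, because the first request and the uncached question tokens are still billed at the full rate.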

Seamless Google Ecosystem & Workspace Integration

One of Gemini’s biggest practical advantages is its deep embedding into Google’s products. It works natively with Google Workspace (Gmail, Docs, Sheets, Slides, Drive), letting users summarize long email threads, generate full presentations or spreadsheets from files, pull data directly from Drive, or enhance meetings in Google Meet with real-time insights. On mobile and desktop, Gemini can act as a system-level assistant that understands screen context across apps. This tight ecosystem integration makes it particularly valuable for teams and enterprises already working in a Google-centric environment, turning scattered tools into a unified AI-powered workflow.

Where Gemini Excels

Gemini excels at real-time information retrieval, multimodal tasks (images, video, audio), research aggregation from multiple sources, and productivity integration within Google's tools. Thanks to its tight integration with the Google ecosystem, Gemini knows about your calendar, can access your Drive files, and works inside the productivity tools you're already using. It’s more a tool for solving urgent problems than a conversational partner. It's perfect for short queries like "do this," "calculate this," or "explain this briefly." Gemini is also particularly effective for tasks where up-to-date data and working with sources are crucial. Where it struggles is the same place most fast tools struggle: long, sustained analytical work. It is optimized for speed and retrieval rather than sustained generative reasoning, which can show up as inconsistency in tone or argument structure across very long analytical writing.

Claude vs. Gemini Side-by-Side Comparison

Development Differences

Claude was developed with safety as a foundational priority. Instead of relying solely on human feedback, Anthropic uses Constitutional AI - a unique approach where the model is guided by a written set of principles (“constitution”). It learns to critique and refine its own outputs according to these rules. This approach results in a model that tends to be more balanced, more cautious, and less likely to produce misleading or harmful answers than other AIs, which tend to sound more confident.

Gemini follows a more conventional frontier-model training path. It uses large-scale pre-training on massive multimodal datasets (text, images, audio, video, and code), followed by supervised fine-tuning and Reinforcement Learning from Human Feedback (RLHF) - the same core technique used by most modern LLMs like ChatGPT. Google also heavily relies on its own real-world data and grounding in Google Search for more up-to-date and factual responses. It also adds content filters tailored to Google’s ecosystem and policies designed to reduce harmful or biased outputs.

Programming

Claude Opus 4.6 is among the leaders in coding benchmarks, scoring 80.8% on SWE-Bench Verified; its predecessor, Claude Opus 4.5, scores marginally higher at 80.9%. Claude is particularly good at big, complex coding projects that require sustained reasoning across many files and many steps, such as refactoring legacy code or debugging subtle logic errors. It can maintain focus over long sessions, understand the project's overall structure, and even turn vague requests into good code. Developers say it feels like it “gets what I really want” better than other AIs.

But Gemini 3.1 Pro is only 0.2 percentage points behind Opus 4.6, hitting 80.6% on SWE-Bench Verified, which makes it strong for code generation and explanation. It performs well when paired with Google’s own developer tools (such as Vertex AI, Cloud Run, Cloud Workstations, etc.). But when the coding task becomes large, complex, and requires many iterative changes across a big project (true software engineering work), Claude currently handles it better - it stays more consistent, makes fewer mistakes over long sessions, and better understands the “big picture” of the codebase.

Image and Video Analysis and Generation

When it comes to multimodality, Claude and Gemini take noticeably different approaches.

Claude excels at image analysis and visual reasoning. It can accurately describe images, extract text, interpret charts and diagrams, and understand screenshots or visual data within documents. Through its Artifacts feature, Claude can also generate and iteratively refine code-based visuals such as diagrams, interactive charts, SVGs, and simple web interfaces directly in a live preview pane. However, it does not natively generate images or support video creation or editing.

Gemini, being natively multimodal, can process and reason over images, audio, and video simultaneously - for example, analyzing a recorded meeting while cross-referencing its slides and documentation. Gemini also supports image and video generation, allowing users to create images, short videos, or image-to-video content from text prompts. It makes Gemini particularly strong for creative workflows, multimedia content creation, visual data exploration, and tasks that combine multiple media types in one conversation.

Text Processing and Generation

Both models offer a 1-million-token context window, allowing them to handle massive inputs such as entire code repositories, long research corpora, or hundreds of pages of documentation in a single session.

Claude consistently produces well-structured, coherent, and high-quality long-form writing. It maintains tone, style, and logical flow across thousands of words, making it ideal for documentation, technical reports, in-depth articles, and educational content. The model rarely loses coherence in complex explanations. It also writes more like a human - warmly, intuitively, with an eye for emotion, more accurately conveying the desired style and tone of the text.

Gemini 3.1 Pro performs strongly on shorter-to-medium content, summaries, and responses that benefit from real-time information or multimodal inputs. However, it can sometimes feel less consistent than Claude when maintaining a specific voice or structure across very long narratives.

Research and information access

Claude relies primarily on its training data (knowledge cutoff around early 2025). It has an optional web search feature that users must manually enable per chat. Developers also have to explicitly enable a web search tool (and a related web fetch tool) when making API calls. But Claude excels at deep synthesis and analysis of documents provided by the users. It shines when processing lengthy PDFs, technical papers, or stacks of research materials, often delivering more consistent depth and structured reasoning. It is particularly strong at maintaining complex logical arguments across long responses.

Gemini, on the other hand, has native, always-available Google Search integration, giving it a clear advantage for current events, market research, and fact-finding that requires up-to-date information. When you need data from the past week or breaking news, Gemini can pull verified results directly. Additionally, its native multimodal capabilities make it more effective at analyzing charts, extracting insights from screenshots, and reasoning over visual context.

Pricing

Both platforms offer free tiers for experimentation and paid plans for serious usage.

Claude's pricing: free users have access to Sonnet 4.6 and Opus 4.5, but with usage limits. Claude Pro ($20/month) unlocks all models with higher limits and access to coding tools. Claude Max ($100-200/month) offers 5-20× the Pro usage and is suitable if you rely on it for heavy work, big projects, or advanced features. For teams, plans start at $25/user/month with options for premium developer seats at $150/user/month. For API usage, Claude charges around $5 per million input tokens and $25 per million output tokens for Opus 4.6 (smaller models like Sonnet are cheaper).

Gemini's pricing: free tier includes limited access to Gemini 3.1 Pro plus generous access to the faster 3 Flash model. Gemini Plus ($7.99/month) offers increased limits and basic Workspace features. Gemini Pro ($19.99/month) adds full access to Gemini 3.1 Pro, deeper Workspace integration, and better image/video generation credits. Gemini's Ultra tier ($249.99/month) targets users needing cutting-edge capabilities. Gemini's API pricing is significantly more affordable than Claude’s flagship: roughly $2 per million input tokens and $12 per million output tokens (for contexts up to 200K tokens; rates roughly double beyond that). The lighter Flash model is even cheaper. Google also offers Context Caching, which can reduce costs dramatically (often 70–90%) for repeated use of large reference documents or codebases. Because Gemini uses a credit system for some generation features (images/video) and also offers context caching, a direct “per-token” comparison with Claude can be tricky: the two use different billing models.
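For a rough sense of how the quoted API rates compare on a single request, here is a minimal sketch. It uses only the approximate per-million-token prices mentioned above; modeling "roughly double" beyond 200K tokens as exactly 2x is an assumption, as are all names in the code. Caching, credits, and subscription plans are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Approximate API prices in dollars per million tokens (from this article)."""
    input_per_million: float
    output_per_million: float


CLAUDE_OPUS = Rates(input_per_million=5.0, output_per_million=25.0)
GEMINI_PRO = Rates(input_per_million=2.0, output_per_million=12.0)

# ASSUMPTION: "rates roughly double" past 200K tokens is modeled as exactly 2x.
GEMINI_LONG_CONTEXT_THRESHOLD = 200_000
GEMINI_LONG_CONTEXT_MULTIPLIER = 2.0


def request_cost(rates: Rates, input_tokens: int, output_tokens: int,
                 multiplier: float = 1.0) -> float:
    """Dollar cost of one request at the given rates."""
    base = (input_tokens / 1_000_000 * rates.input_per_million
            + output_tokens / 1_000_000 * rates.output_per_million)
    return base * multiplier


def claude_cost(input_tokens: int, output_tokens: int) -> float:
    """Flat pricing: the article states there is no long-context surcharge."""
    return request_cost(CLAUDE_OPUS, input_tokens, output_tokens)


def gemini_cost(input_tokens: int, output_tokens: int) -> float:
    """Apply the assumed long-context multiplier above the 200K threshold."""
    long_context = input_tokens > GEMINI_LONG_CONTEXT_THRESHOLD
    multiplier = GEMINI_LONG_CONTEXT_MULTIPLIER if long_context else 1.0
    return request_cost(GEMINI_PRO, input_tokens, output_tokens, multiplier)


for tokens_in in (100_000, 300_000):
    print(tokens_in,
          f"Claude ${claude_cost(tokens_in, 2_000):.3f}",
          f"Gemini ${gemini_cost(tokens_in, 2_000):.3f}")
```

With these assumed numbers the gap narrows noticeably once a prompt crosses the 200K-token threshold, which is one reason a headline per-token price alone can mislead.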

So Which Should You Use? Claude or Gemini?

The default instinct is to ask which model is better. That’s the wrong question. What actually matters is where each model is more useful under real workload.

If you’re working on software, the difference shows up quickly. Generating code is easy. Most models can do that. The harder part is everything that comes after: refactoring, debugging, making changes across multiple files without introducing new issues. This is where Claude tends to hold up better. It keeps track of intent across a codebase and makes fewer mistakes as complexity increases. Gemini is still a strong option, especially if your workflow already lives inside Google Cloud. It integrates directly into that environment, which removes friction. For teams already using that stack, that matters more than marginal differences in model behavior.

For writing, the gap isn’t about sounding better. Most models can produce clean text. The difference is consistency over time. Claude is more reliable when the output gets longer or more structured. Documentation, technical content, anything that builds on itself over thousands of words - it tends to stay aligned without drifting off course. Gemini is more useful when the content depends on what’s happening right now. If you’re writing about current events, pulling in fresh information, or combining text with images or video concepts, it has a clear advantage.

For research and analysis, the distinction is also straightforward. Claude works better when you already have the material. Give it a set of documents, reports, or datasets, and it will process them as a coherent whole. The output is usually more structured and consistent. Gemini is stronger when the information needs to be gathered first. Its ability to pull and synthesize data from multiple live sources makes it more useful for tracking fast-moving topics.

In business environments, the decision is less about output quality and more about where the model fits. Claude is more flexible from an infrastructure standpoint. It can be deployed in controlled environments, integrated into internal systems, and configured in a way that gives teams tighter control over data. That’s why many companies run it through AWS Bedrock, especially when compliance is a concern. Gemini takes a different approach. It’s deeply embedded into Google Workspace - Gmail, Docs, Sheets. If your team already operates there, the productivity gains are immediate. You’re not introducing a new tool. You’re extending the ones people already use.

This becomes even more important in regulated industries. It’s not just about what the model can do. It’s about where it runs and how data is handled. Claude is generally easier to deploy in environments where data control is strict, including setups like AWS GovCloud. That makes it a more natural fit for healthcare, finance, and government use cases. Gemini can be used in those scenarios, but it typically requires more careful configuration to meet the same constraints.

So the choice isn’t really Claude vs. Gemini. It’s whether your work depends more on maintaining context and working through complexity or accessing live information and integrating into an existing ecosystem. Once you frame it that way, the decision becomes much more obvious.

Final Thoughts

Benchmark scores are a starting point, not an answer. The model that tops a leaderboard this month isn't automatically the right tool for your codebase, your writing workflow, or your team's stack. What's actually happened is that Claude, Gemini, and other models like ChatGPT have diverged - in the same way programming languages did. Nobody seriously debates whether Python or Go is "better." You ask: better for what? The same question applies here. Claude is the careful, thorough one. Gemini is fast. The mistake is treating this as a permanent verdict. These models are shipping major updates every few weeks. Whatever is true today may not be true in 90 days. The only evaluation that actually matters is running these models against your own work.